Large language models (LLMs) are all the rage, but what practical value can they bring to manufacturing SMEs? Let's explore some ideas that can make a real difference to your business.

TL;DR

Large language models are very useful in manufacturing. Here are three practical ways engineers can leverage LLMs to boost productivity:

- First, use LLMs as an assistant to automatically generate SQL queries and Excel macros.

- Second, build a Retrieval Augmented Generation (RAG) system to centralise and quickly access your company's knowledge base.

- Third, develop intelligent LLM agents that integrate additional capabilities (like executing Python scripts or processing images) to perform complex tasks.

Start small and experiment to transform your daily workflows.

Most of us will have used an LLM by now.

From OpenAI's ChatGPT to Google's Gemini and many more besides, an LLM can help you with all sorts of language tasks, whether that's writing an email or suggesting a reasonable recipe for chilli con carne.

But what could we engineers be using them for in our day jobs? Can they be useful?

The answer is an emphatic yes.

Here we're going to talk about three ways you can incorporate this technology into your workplace.

1. Your Handy Assistant

Let's start with the simplest way you can use an LLM in your work: as an assistant who has knowledge in areas that complement yours.

As engineers, we often deal with databases and Excel spreadsheets to get at the information we need. However, if you're not an expert with SQL or VBA, creating those queries and macros can become a tedious chore.

LLMs can generate these commands and scripts for you according to your specific requirements.

Typing a description of what you're looking for into the LLM for the first time and seeing it respond with a perfect query or macro is a revelation. More importantly, it can turbocharge your productivity and save you a lot of time!

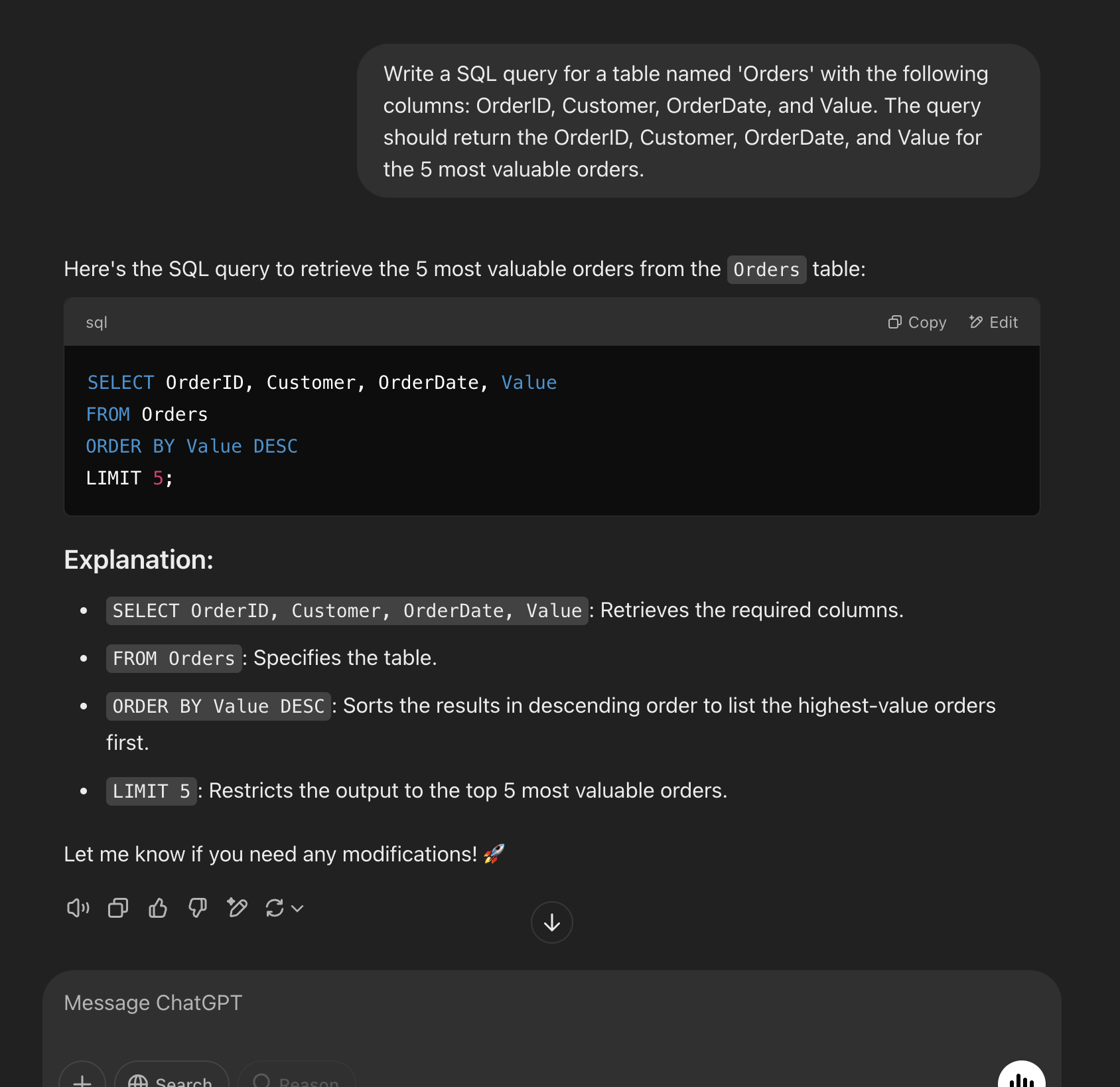

Using ChatGPT to generate a (very simple) SQL query
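Before pointing a generated query at a live database, it is worth trying it on a few rows of made-up data. The sketch below is one way to do that with Python's built-in `sqlite3` module. The table name, the columns and the query itself are hypothetical examples of what an LLM might return, not taken from a real system.

```python
import sqlite3

# Hypothetical query of the kind an LLM might return for the request:
# "Total good parts per machine for March 2024, highest first."
GENERATED_SQL = """
SELECT machine_id, SUM(good_parts) AS total_good_parts
FROM production_log
WHERE shift_date BETWEEN '2024-03-01' AND '2024-03-31'
GROUP BY machine_id
ORDER BY total_good_parts DESC;
"""


def run_on_sample_data(sql):
    """Run a query against a throwaway in-memory table of invented rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE production_log "
        "(machine_id TEXT, shift_date TEXT, good_parts INTEGER)"
    )
    conn.executemany(
        "INSERT INTO production_log VALUES (?, ?, ?)",
        [
            ("M1", "2024-03-04", 120),
            ("M2", "2024-03-04", 150),
            ("M1", "2024-03-05", 200),
            ("M2", "2024-04-01", 999),  # outside March, should be excluded
        ],
    )
    rows = conn.execute(sql).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    for machine_id, total in run_on_sample_data(GENERATED_SQL):
        print(machine_id, total)
```

If the result on the sample rows matches what you worked out by hand, you can be more confident that the query does what you asked for.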

Now, this might be something you have tried already. However, what you might not have considered is whether using the standard offering from the likes of OpenAI and Google is suitable for your company.

Data security concerns in your company may limit how you can use the online chatbots, or rule them out altogether. In these cases, all is not lost. You can instead look into an on-premises, or local, solution. There are LLMs you can download, such as LLaMA from Meta, Gemma from Google, Mistral from Mistral AI and more, that will run on local machines that you keep control of.
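As a rough illustration of what "local" can look like, the sketch below sends a prompt to a model served on your own machine. It assumes you have installed Ollama (one of several tools for running downloaded models, and not something this article depends on), that it is listening on its default local port, and that you have already pulled a model called `mistral`. The helper names are made up for this example.

```python
import json
import urllib.request

# Assumption: an Ollama server is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="mistral"):
    """Build the HTTP request for a single, non-streamed completion."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_llm(prompt, model="mistral"):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    # Only works if the local server is actually running.
    print(ask_local_llm("Write a SQL query that counts rows in a table called orders."))
```

Nothing in this exchange leaves your machine, which is the point of going local.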

2. Leveraging your company's knowledge base

Next, let's up the complexity a bit.

Your company will probably have reams of paper documents and gigabytes of files containing operating manuals, process documents, production logs, and more. If you want to troubleshoot an issue or check how they did something five years ago, going through all of those pages to find the information you're looking for is a laborious and time-consuming process.

LLMs can save you countless hours spent searching through documents. However, simply asking ChatGPT about something an ex-colleague did in the past or a machine made bespoke for your company won't get you very far!

Thankfully there is a solution.

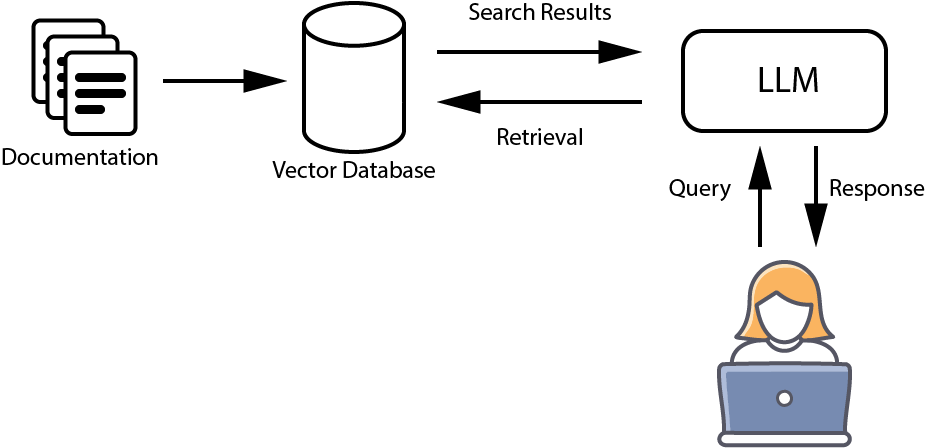

Retrieval Augmented Generation (RAG) is a powerful method for adding to the knowledge of a chatbot. In this way you can combine the natural language abilities of the LLM with the copious amounts of information accumulated by your company.

Simple diagram of a RAG system

Building a RAG application for your organisation is relatively simple:

- Data collection: First, identify and gather all the information you think will be useful.

- Deal with any paper: For paper-based documents, use optical character recognition (OCR) to digitise them.

- Create a data store: RAG uses something called a vector database to store encoded versions of your documents (called embeddings).

- Build a pipeline: Create a simple process (e.g. using Python) that takes a user query, retrieves relevant information from your documents, and then generates a concise, informed response.
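To make the last two steps more concrete, here is a deliberately tiny sketch of the retrieve-then-prompt part of a pipeline. It is a toy under stated assumptions: the three documents are invented, a simple word count stands in for a real embedding model, and a Python dictionary stands in for a vector database. The final step of sending the prompt to an LLM is left out.

```python
import math
import re
from collections import Counter

# Invented example documents standing in for your company's files.
DOCUMENTS = {
    "press_manual.txt": "Hydraulic press P-200: if pressure drops below 150 bar, "
                        "check the pump seal and oil level.",
    "oven_procedure.txt": "Curing oven procedure: preheat to 180 C and hold parts "
                          "for 45 minutes.",
    "maintenance_log.txt": "2019 maintenance log: conveyor belt replaced after "
                           "motor overheating.",
}


def embed(text):
    """Toy 'embedding': a bag-of-words count (a real system uses a model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


# The "vector store": one embedding per document.
INDEX = {name: embed(text) for name, text in DOCUMENTS.items()}


def retrieve(query, top_k=1):
    """Return the names of the top_k documents most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(
        INDEX,
        key=lambda name: cosine_similarity(query_vector, INDEX[name]),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query, top_k=1):
    """Combine the retrieved text and the question into a prompt for an LLM."""
    context = "\n".join(DOCUMENTS[name] for name in retrieve(query, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("Why is the press pressure dropping?"))
```

In a real application you would swap `embed` for a proper embedding model and `INDEX` for a vector database, but the shape of the pipeline stays the same.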

3. LLM Agents

Stepping it up another gear we come to intelligent agents.

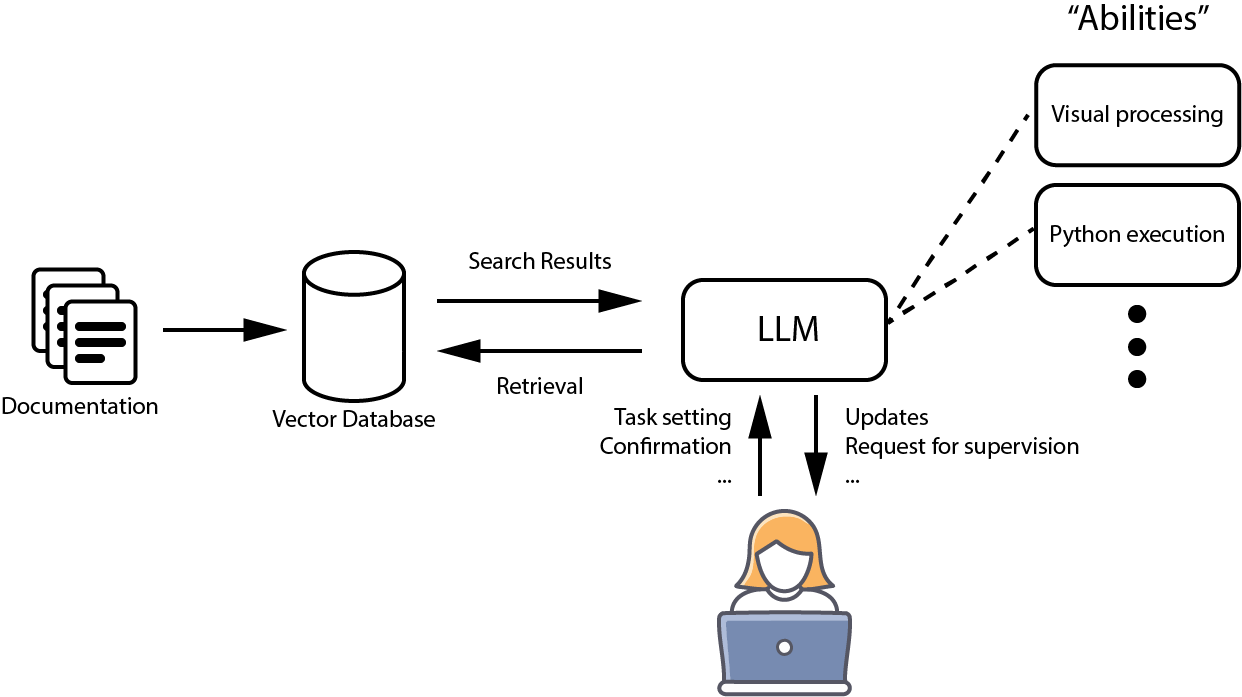

We know that LLMs can be augmented with knowledge like in RAG, but they can also be augmented with abilities!

For example, consider that LLMs are fantastic at language processing. However, what they sometimes struggle with are numbers. If we can somehow give the LLM the ability to "subcontract" out the calculation work, then we greatly magnify its abilities.

This is the principle of giving LLMs extra functionality that they can call on to achieve certain tasks; we usually call such systems "agents".

Simple diagram of a multi-modal agent
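As an illustration of "subcontracting" the calculation work, the sketch below shows what a simple calculator tool might look like. The function name `calculate` is made up for this example. The idea is that the LLM writes out the arithmetic as text, and ordinary code works out the answer. It only accepts plain arithmetic, so it cannot be tricked into running arbitrary code.

```python
import ast
import operator

# The only operations the tool is allowed to perform.
_OPERATORS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def calculate(expression):
    """Evaluate a plain arithmetic expression such as '(480 - 35) * 12'."""

    def _eval(node):
        # Plain numbers (but not True/False, which Python treats as ints).
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPERATORS:
            return _OPERATORS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPERATORS:
            return _OPERATORS[type(node.op)](_eval(node.operand))
        # Anything else (function calls, names, etc.) is rejected.
        raise ValueError("Only basic arithmetic is allowed")

    return _eval(ast.parse(expression, mode="eval").body)


if __name__ == "__main__":
    # e.g. minutes of uptime per shift multiplied by parts per minute
    print(calculate("(480 - 35) * 12"))
```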

It doesn't stop at numerical calculations either. We can give them the ability to execute Python scripts, browse the internet, interface with an MES, process visual images and much, much more.

Imagine asking, "What were the most productive machines last week?" The agent would analyse production logs, perform the necessary calculations, and return a clear answer.
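Here is a minimal sketch of how that exchange might be wired up. Everything in it is illustrative: the production log is invented, `fake_llm` is a stand-in that always picks the same tool (a real agent would send the question and the list of tools to an actual model), and the JSON format for the tool call is an assumption rather than any particular vendor's API.

```python
import json
from collections import defaultdict

# Invented log entries standing in for last week's production data.
PRODUCTION_LOG = [
    {"machine": "Lathe-1", "good_parts": 410},
    {"machine": "Mill-2", "good_parts": 530},
    {"machine": "Lathe-1", "good_parts": 390},
    {"machine": "Press-3", "good_parts": 275},
    {"machine": "Mill-2", "good_parts": 180},
]


def most_productive_machines(top_n=1):
    """Tool: total the good parts per machine and return the top performers."""
    totals = defaultdict(int)
    for entry in PRODUCTION_LOG:
        totals[entry["machine"]] += entry["good_parts"]
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]


# The tools the agent is allowed to call, looked up by name.
TOOLS = {"most_productive_machines": most_productive_machines}


def fake_llm(question):
    """Stand-in for a real model: always asks for the same tool call."""
    return json.dumps(
        {"tool": "most_productive_machines", "arguments": {"top_n": 2}}
    )


def run_agent(question, llm=fake_llm):
    """Ask the model which tool to use, run it, and phrase the result."""
    call = json.loads(llm(question))
    tool = TOOLS.get(call["tool"])
    if tool is None:
        raise ValueError(f"Unknown tool: {call['tool']}")
    result = tool(**call["arguments"])
    return ", ".join(f"{machine} ({count} good parts)" for machine, count in result)


if __name__ == "__main__":
    print(run_agent("What were the most productive machines last week?"))
```

The language model decides what to do; the counting is done by plain, testable code.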

Such a multi-modal agent is vastly more capable than a vanilla LLM.

Final Thoughts

Large language models are more than just conversation simulators! They are practical tools with a lot of value for engineers working in manufacturing and wider industry. By automating routine tasks like generating queries and macros, centralising your company's vast documentation through RAG systems, and building intelligent agents with additional capabilities, you can dramatically enhance your productivity and decision-making.

We've only scratched the surface of what LLMs can do when applied to manufacturing. The key is to start small and experiment. As you build confidence in these tools and secure quick wins, you'll be well positioned to demonstrate their potential to your organisation.

Want to learn more about implementing AI in your manufacturing operations?

Get in touch